TL;DR

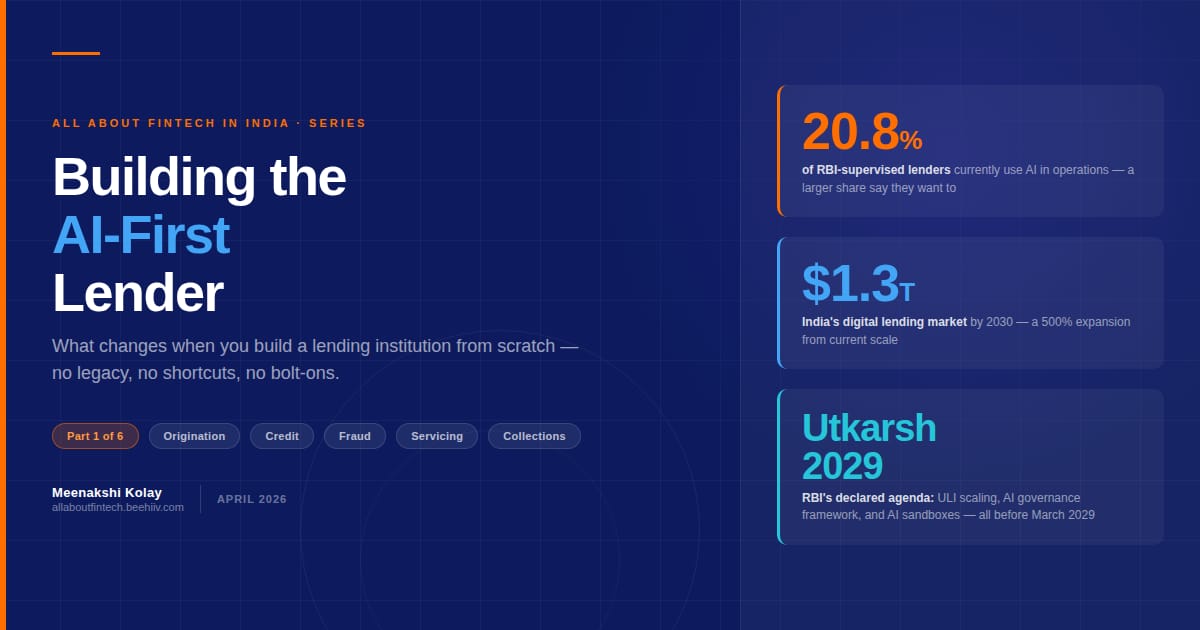

Only 20.8% of RBI-supervised lenders currently use AI in their operations, while a larger share say they want to.

The gap between intent and architecture is the real story.

India's lending stack runs on decades-old core banking systems designed for branch transactions, not real-time inference. AI layered on top of fragmented data produces marginal gains. AI built into the foundation produces structural ones.

RBI's Utkarsh 2029 framework (March 30, 2026) commits explicitly to scaling ULI, issuing an AI governance framework, and establishing AI and Digital sandboxes, all before March 2029.

The RBI FREE AI Committee's seven sutras and the DPDP Act together define what responsible AI in lending must look like: explainable decisions, bias audits, human oversight, and purpose-limited data use. These are the design constraints within which new AI systems must be built.

This series covers six functions: origination, credit, fraud, compliance, servicing, and collections. Each edition covers one. This edition explains why the architecture question matters before any of the functions do.

The legacy lender's advantage is distribution and trust. The AI-first lender's advantage is decisioning speed and data architecture. The winner is whoever masters both and does so at scale.

01/THE STARTING POINT

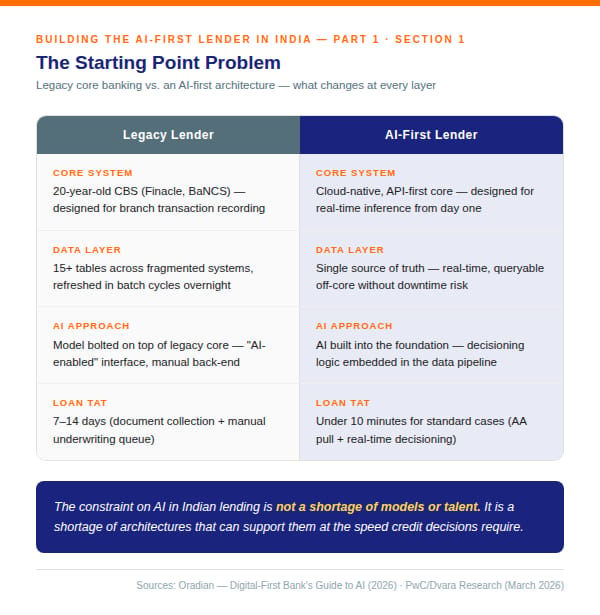

Figure 1: Legacy vs AI-first lender

The core banking systems that power India's scheduled commercial banks and a large share of its NBFCs were built for a specific job: recording branch transactions accurately. Finacle, BaNCS, and their predecessors do that job well. What they were not built for is real-time inference — pulling a live borrower's Account Aggregator cash flows, running them through a risk model, and returning a credit decision in seconds.

The standard response to this problem is integration. Build a digital front-end, connect it to the legacy core via API, layer a credit model on top, and call it AI-enabled lending. The problem is what sits underneath. When the data that feeds your AI model lives in fifteen tables across a twenty-year-old core, refreshed in batch cycles, the model is only as good as the last batch run. That is not real-time underwriting. That is batch underwriting with a better interface.

The quality and accessibility of data can make or break an AI project. Institutions that succeed with AI in financial services tend to have governed change and clear audit trails in their core systems, a stable single source of truth for transactional and customer data, and the ability to query production-grade data off-core without risking downtime.

Most Indian lenders have none of these. They have data. They do not have a data layer.

The constraint on AI in Indian lending is not a shortage of models or talent. It is a shortage of architectures that can support them at the speed credit decisions require.

02/RBI STAND ON AI

Figure 2: RBI Stand on AI

The regulatory guardrails for AI in lending are no longer a discussion paper. They are a declared agenda.

Utkarsh 2029 explicitly commits RBI to "developing frameworks for use of emerging technologies such as AI and Quantum in our financial sector." It also commits to "deploying technology-led supervisory tools" and "developing an indigenous AI tool based on a purpose-built LLM." Read that last one carefully. The regulator is building its own AI surveillance capacity. A lender whose models cannot be explained to an AI-powered supervisory system is not just a governance risk; it is a supervisory risk.

The FREE AI Committee's seven sutras define what responsible AI in digital lending must look like.

03/DATA INFRASTRUCTURE

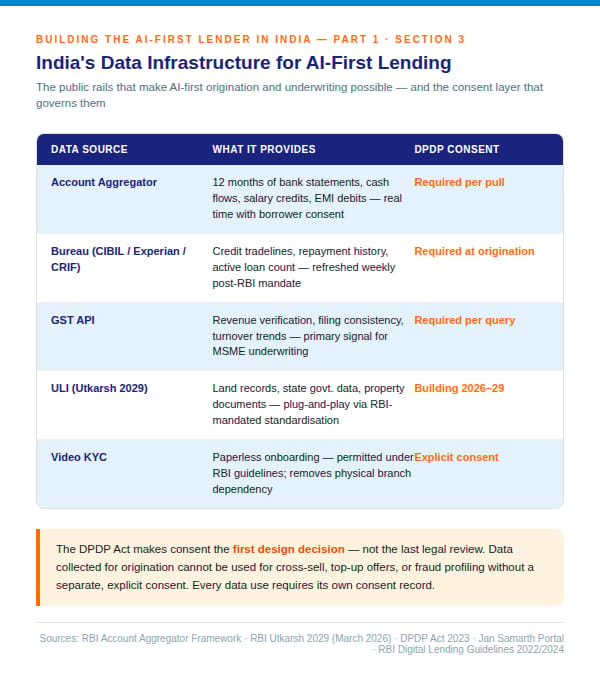

Figure 3: Data Structure in place for AI-based lending

The good news is that India's public data infrastructure has improved faster than most lenders' internal architectures.

The Account Aggregator network is the foundational layer. With borrower consent, a lender can pull up to 12 months of bank statement data from any Financial Information Provider (FIP) in real time: cash flows, salary credits, EMI debits, and GST-linked transaction patterns. This is richer data than any document collection process could produce, and it arrives in seconds rather than days. (The operative phrase is "with borrower consent". Under the DPDP Act, the consent architecture, meaning what data you pull and for what purpose, is not a legal formality. It is the first design decision in your origination stack.)
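To make the point about consent being a design decision concrete, here is a minimal sketch of a purpose-limitation check that runs before any data pull. The `Consent` record, its field names, and the `can_pull` helper are all hypothetical and are not taken from the Account Aggregator specification or the DPDP Act; they only illustrate the idea that a request should be refused unless it stays inside what the borrower agreed to.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Consent:
    """Hypothetical consent record; field names are illustrative only."""
    borrower_id: str
    purpose: str            # e.g. "loan_underwriting"
    data_types: frozenset   # e.g. frozenset({"bank_statement"})
    valid_until: date       # last day the consent may be used
    months_allowed: int     # how far back the borrower agreed to share


def can_pull(consent, purpose, data_type, months, today):
    """Return True only if the request stays inside the consented scope."""
    return (
        consent.purpose == purpose
        and data_type in consent.data_types
        and months <= consent.months_allowed
        and today <= consent.valid_until
    )


# Example consent: bank statements only, 12 months, for underwriting.
consent = Consent(
    borrower_id="B-001",
    purpose="loan_underwriting",
    data_types=frozenset({"bank_statement"}),
    valid_until=date(2026, 12, 31),
    months_allowed=12,
)
```

With this shape, a call such as `can_pull(consent, "marketing", "bank_statement", 12, date(2026, 6, 1))` is refused because the purpose differs from the one consented to, even though the data type and window match.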

The Unified Lending Interface, which Utkarsh 2029 commits to scaling, extends this further. ULI is designed to let lenders access consented data from diverse sources (land records for agricultural lending, property documents, state government data) through standardised plug-and-play connectivity. RBI's explicit commitment to ULI scaling means that the data infrastructure for AI-first origination is being built at the national level. The question is whether your lending architecture can consume it.

Bureau data has also improved. RBI's weekly reporting mandate has made bureau data more current. CIBIL, Experian, CRIF, and Equifax now offer more granular tradeline data, and the credit information ecosystem has deepened since the MSME lending push post-2020. GST filing data, accessible via the Jan Samarth portal's digital credit assessment model, gives lenders a real-time revenue verification signal for MSME borrowers that no salary slip can match.

The data is there. The architecture to use it is the gap.

04/WHO IS BUILDING AI FIRST?

The honest answer is: no one is fully AI-first yet. But the spectrum is wide.

At one end sit the major scheduled commercial banks. Their CBS infrastructure dates back 15 to 25 years in most cases. AI initiatives exist (most have launched some version of a credit model or a fraud detection overlay), but they sit on top of the legacy core, not inside it. The data lake is separate from the transaction system. The model is separate from the decisioning engine. Integration points multiply, latency creeps in, and the "AI-powered" loan takes 48 hours because the data refresh cycle runs overnight.

At the other end sit a handful of cloud-native NBFCs and digital lenders that built their technology stack after 2018, after the Account Aggregator framework was conceptualised and after UPI had proven that real-time data infrastructure was viable at scale. Navi, Kreditbee, Axio, and the post-merger Slice entity have all built on cloud infrastructure with API-first cores. Their origination is faster. Their underwriting models refresh more frequently. Their servicing costs are lower. They are not fully AI-first (no lender in India is), but they are materially closer to the architecture this series describes. Among the older NBFCs, Bajaj Finance and L&T Finance have made major investments in AI, especially voice AI. They are realising significant cost benefits and have also invested in core technology and analytics, though the results are not yet fully reported.

In between sits the vast majority: NBFCs and co-lending partnerships that have digitised their front-end without rebuilding their back-end. The application journey is smooth. The underwriting still runs on a spreadsheet that a credit officer fills manually.

The Oradian whitepaper “The Digital First Bank’s guide to AI” has a useful AI readiness diagnostic for this middle segment. The questions are straightforward:

Can you access detailed transactional data going back 12 to 24 months without disrupting the live core?

Can you add new decision logic into existing customer journeys without raising a vendor ticket?

Can you explain, in simple terms, why a customer was approved or declined by your AI system?

Most Indian lenders cannot answer YES to all three.
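The third question, explaining a decision in simple terms, is easiest to see with a toy example. The sketch below assumes a simple additive scorecard so that each input's contribution to the score can be read off directly and turned into reason codes. The feature names, weights, base score, and cut-off are invented for illustration; a production model would be fitted and validated on portfolio data, and more complex models would need a dedicated attribution method.

```python
# Hypothetical scorecard: all names and numbers are illustrative assumptions.
WEIGHTS = {
    "avg_monthly_inflow_lakh": 8.0,   # higher inflows raise the score
    "bounced_emis_12m": -15.0,        # each bounced EMI lowers the score
    "months_of_gst_filings": 1.5,     # longer filing history raises the score
}
BASE_SCORE = 50.0
APPROVE_AT = 60.0


def decide(features):
    """Return (decision, score, reasons); reasons are sorted most negative first."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = BASE_SCORE + sum(contributions.values())
    decision = "approve" if score >= APPROVE_AT else "decline"
    reasons = sorted(contributions.items(), key=lambda item: item[1])
    return decision, score, reasons


applicant = {
    "avg_monthly_inflow_lakh": 1.0,
    "bounced_emis_12m": 2,
    "months_of_gst_filings": 6,
}
decision, score, reasons = decide(applicant)
```

For this applicant the first entry in `reasons` is the bounced-EMI feature, which is the plain-language answer to "why was this customer declined". The design choice is that the explanation is produced by the same function that produces the decision, so the two cannot drift apart.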

The AI-first lender is not the one that has the best model. It is the one that has the architecture to run any model it needs, retrain it on live portfolio data, and explain every output.

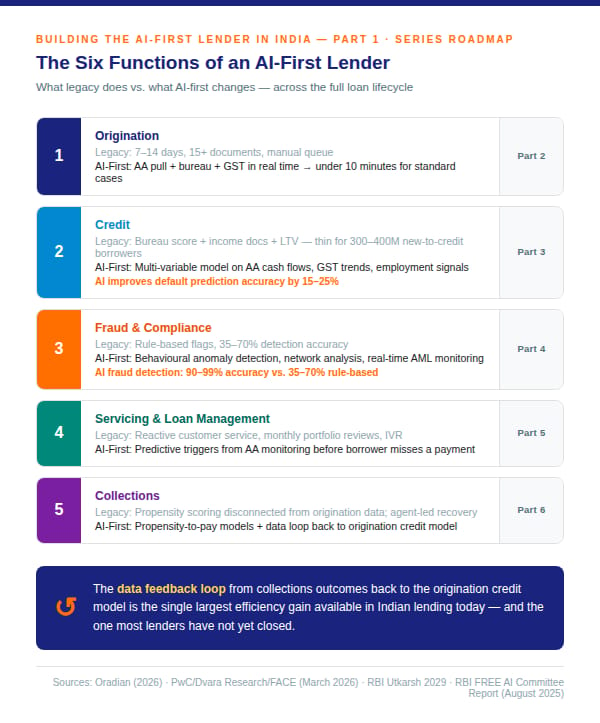

05/AI USAGE ACROSS SIX FUNCTIONS

An AI-first lender is not one system. It is six functions redesigned from the foundation up.

Origination: From document collection to real-time data pull via Account Aggregator, bureau, and GST. From 7 to 14 days to under 10 minutes for standard cases.

Credit: From a scorecard built on bureau score and income documents to a multi-variable model running on AA cash flows, GST trends, and employment signals. The new-to-credit borrower, estimated at 300 to 400 million Indians, is the structural opportunity that only alternative data underwriting can unlock.

Fraud: From rule-based flags to behavioral anomaly detection at application and transaction level. AI fraud detection systems achieve 90 to 99% accuracy against 35 to 70% for traditional rule-based systems. The architecture to get there requires data that most Indian lenders do not yet have in one place.

Compliance: From periodic manual sampling to automated surveillance and audit logging across the model development lifecycle. Utkarsh 2029's commitment to technology-led supervisory tools makes this function more urgent, not less.

Servicing: From reactive customer service and monthly portfolio reviews to predictive triggers before the borrower calls. The early warning signal (a 30-day decline in AA cash flows, a missed GST filing, a salary credit that did not appear) is visible in real time if your data layer is built for it.

Collections: The function where every upstream design decision pays off or compounds into loss. The data feedback loop from collections outcomes back to the origination model is the single largest efficiency gain available in Indian lending today, and the one most lenders have not closed.
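The servicing triggers listed above can be sketched as a small rule function. This is only an illustration of the three signals named in the text; the function name, its parameters, and the 30% decline threshold are assumptions, and a real implementation would read these values from the lender's own data layer.

```python
def early_warning_flags(
    inflows_prev_30d,
    inflows_last_30d,
    gst_filed_this_period,
    salary_credited_this_month,
    decline_threshold=0.30,  # assumed threshold, not from the source
):
    """Return a list of early warning flags for one borrower."""
    flags = []

    # Compare the latest 30-day inflow total with the preceding 30 days.
    if inflows_prev_30d > 0:
        decline = (inflows_prev_30d - inflows_last_30d) / inflows_prev_30d
        if decline >= decline_threshold:
            flags.append("cash_flow_decline")

    if not gst_filed_this_period:
        flags.append("missed_gst_filing")

    if not salary_credited_this_month:
        flags.append("salary_credit_missing")

    return flags


flags = early_warning_flags(
    inflows_prev_30d=100_000,
    inflows_last_30d=60_000,
    gst_filed_this_period=True,
    salary_credited_this_month=False,
)
```

The rules themselves are trivial. What makes them usable is that all three inputs are current, which is the data layer point the servicing paragraph makes.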

Figure 5: AI usage across key lending functions
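For the compliance function, one way to think about audit logging across the model lifecycle is a tamper-evident log in which each entry carries a hash of the previous one. The sketch below is an assumption about how such a log could be built, not a description of any regulatory requirement or of a specific lender's system; the event fields are invented for illustration.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _entry_hash(prev_hash, event):
    """Hash the previous hash together with a canonical form of the event."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append(
        {"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)}
    )


def verify(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"model": "credit_v1", "action": "trained"})
append_entry(audit_log, {"model": "credit_v1", "action": "approved_for_production"})
```

Editing any earlier event after the fact causes `verify(audit_log)` to return False, which is the sense in which the audit trail is built in rather than bolted on.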

END NOTE

This series is a design exercise, not a prediction.

The question is not whether AI will reshape Indian lending. Utkarsh 2029 has answered that. RBI is building the regulatory infrastructure, the data infrastructure, and its own supervisory AI tools over the next three years. The digital lending market is projected to reach USD 1.3 trillion by 2030. AI is needed to fuel this 500% expansion from current levels.

The question is what you build when you have no legacy to protect. What does the origination stack look like when you design the data layer first? What does the credit model look like when it is trained on AA cash flows rather than ITR documents? What does compliance look like when the audit trail is built in, not bolted on?

Those are architecture questions. And they do have answers.